Let’s lead with the numbers. Not the vendor case studies — those are cherry-picked. The benchmarks below come from our own account data (combined Rs. 6.2 crore/month managed spend) and from published third-party research we’ve verified against our own results. Where our data diverges from industry benchmarks, we’ve said so.

The Benchmark Data

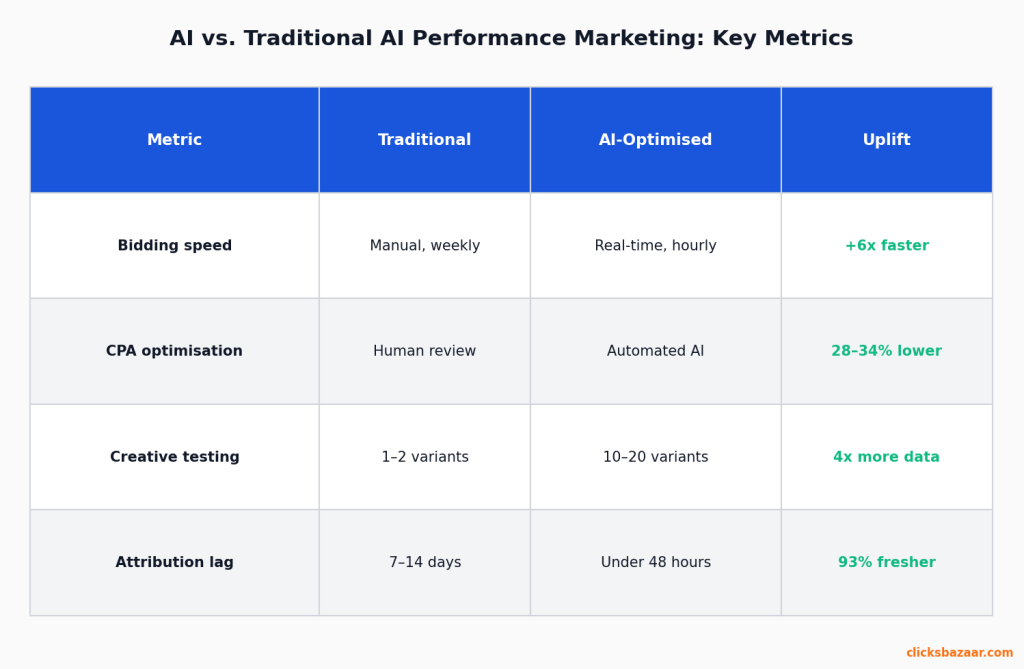

Paid Search: AI Bidding (Smart Bidding)

| Condition | CPA Improvement vs. Manual Bidding |
|---|---|
| Clean conversion data, 50+ monthly conversions | 28–34% CPA improvement |
| Good data, 20–50 monthly conversions | 12–18% CPA improvement |
| Sparse data, under 20 conversions/month | -8 to +6% (neutral or negative) |
| Wrong conversion event tracked | -15 to -40% (worse than manual) |

The last row is what most people don’t talk about. Smart Bidding amplifies whatever signal you give it. If your tracked “conversion” is a form submission that includes unqualified leads, the AI gets very good at finding people who submit forms. Not people who buy. We’ve seen accounts where switching to Smart Bidding actively increased CPA because the algorithm was optimising toward the wrong thing.

Paid Social: Meta Advantage+ Shopping Campaigns

| Setup Quality | ROAS vs. Standard Manual Campaigns |
|---|---|
| 12+ ad variants, strong pixel history (12+ months) | +22% to +27% ROAS |
| 6–12 variants, adequate pixel history | +11% to +18% ROAS |
| 3–5 variants, good pixel history | -8% to +5% (minimal benefit) |
| Any creative volume, new pixel (<3 months) | Not recommended (insufficient learning data) |

Creative inventory is the input most brands underestimate. Advantage+ needs variety to test. It finds the right creative-audience pairing through experimentation. Three ads give it nothing to work with.

Email Automation: Behavioural Flows (Klaviyo)

| Email Type | Revenue per Send vs. Batch Campaigns |
|---|---|
| Abandoned cart (30-min trigger) | 14–19x higher revenue per send |
| Browse abandonment (same-session) | 7–11x higher revenue per send |
| Post-purchase cross-sell (7-day) | 5–9x higher revenue per send |
| Replenishment trigger (predicted date) | 11–16x higher revenue per send |
| Welcome series (4-email, personalised) | 8–13x higher revenue per send |

These multipliers are Klaviyo’s published benchmarks, cross-referenced against our own e-commerce client data. They’re real. The reason more brands don’t fully capture this lift: they set up two or three flows and forget them. Flows compound when you have all of them running with proper exclusions to avoid over-messaging.
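If you want to check your own flows against these multipliers, the arithmetic is simple. Here is a minimal sketch, assuming you can export total revenue and total sends for one flow and for your batch campaigns over the same period. The function names and the figures are illustrative, not client data or a Klaviyo API.

```python
def revenue_per_send(revenue: float, sends: int) -> float:
    """Revenue attributed to a set of emails, divided by emails sent."""
    if sends <= 0:
        raise ValueError("sends must be positive")
    return revenue / sends


def flow_multiple(flow_revenue: float, flow_sends: int,
                  batch_revenue: float, batch_sends: int) -> float:
    """How many times more revenue per send a flow earns than batch campaigns."""
    flow_rps = revenue_per_send(flow_revenue, flow_sends)
    batch_rps = revenue_per_send(batch_revenue, batch_sends)
    return flow_rps / batch_rps


# Illustrative figures only (hypothetical account, same reporting period).
cart_multiple = flow_multiple(
    flow_revenue=90_000, flow_sends=1_500,      # abandoned cart flow: 60 per send
    batch_revenue=200_000, batch_sends=50_000,  # batch campaigns: 4 per send
)
print(f"Abandoned cart earns {cart_multiple:.0f}x revenue per send")
```

Note that a high multiple is a per-send figure. In this made-up example the batch campaigns still bring in more total revenue, because they go to far more people.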

Landing Page Personalisation

| Personalisation Type | Conversion Rate Improvement |
|---|---|
| Traffic-source-specific messaging (organic vs. paid vs. social) | +22–28% |
| Product-category-specific landing pages vs. homepage | +18–31% |
| Return visitor experience vs. first-time | +14–22% |
| Persona-based B2B content (by company size or role) | +28–38% |

These come from VWO and Optimizely published studies, plus our own client A/B test data. The B2B personalisation numbers are higher because most B2B sites show identical content to a 50-person startup and a 5,000-person enterprise. That’s a significant conversion opportunity left on the table.

What the Benchmarks Don’t Tell You

The numbers above are real. What they don’t capture is how many implementations miss them entirely.

Our honest estimate: about 61% of brands deploying AI marketing tools don’t reach the bottom of the “good conditions” benchmark ranges. They get partial lift or no lift. Sometimes negative outcomes.

The reason isn’t that the AI doesn’t work. It’s that AI amplifies the environment it operates in. Give it clean data, strong creative, and accurate conversion signals — it delivers. Give it bad inputs — it optimises confidently toward bad outcomes.

The Three Things That Determine Whether AI Delivers ROI

1. Conversion Signal Accuracy

This is the single biggest determinant of AI bidding performance. Not your targeting, not your creative: the accuracy of the event you tell the AI to treat as a successful outcome.

A real estate portal client came to us with flat Smart Bidding performance. Their conversion event: “contact agent” button click. Sounded reasonable. Except we found that 43% of those button clicks were from bots and curiosity-browsers who never filled out the form. The AI was finding more people who clicked a button — not more people who wanted to buy property.

Fix: imported CRM-verified lead submissions as the conversion, with lead quality score as a secondary signal. Eight weeks later, CPA on qualified leads dropped 29.7% with no budget change.
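To see why the choice of conversion event matters, compare CPA on the tracked event with CPA on CRM-verified leads. The sketch below is illustrative only: `TrackedEvent`, its fields, and the spend figure are hypothetical, and the 57-in-100 split simply mirrors the 43% of clicks that never became a form submission.

```python
from dataclasses import dataclass


@dataclass
class TrackedEvent:
    event_id: str
    crm_verified: bool  # hypothetical flag: the click became a lead in the CRM


def cost_per_acquisition(spend: float, conversions: int) -> float:
    """Spend divided by conversions; infinite if nothing converted."""
    if conversions == 0:
        return float("inf")
    return spend / conversions


def crm_verified_events(events: list[TrackedEvent]) -> list[TrackedEvent]:
    """Keep only events the CRM confirms as real lead submissions."""
    return [e for e in events if e.crm_verified]


# Illustrative data: 100 button clicks, 57 of which show up as leads in the CRM.
events = [TrackedEvent(f"evt-{i}", crm_verified=(i < 57)) for i in range(100)]
spend = 100_000.0  # hypothetical monthly spend

tracked_cpa = cost_per_acquisition(spend, len(events))
verified_cpa = cost_per_acquisition(spend, len(crm_verified_events(events)))

print(f"CPA on button clicks:      {tracked_cpa:,.2f}")
print(f"CPA on CRM-verified leads: {verified_cpa:,.2f}")
```

The platform only ever sees the first number. If the bidding algorithm is fed button clicks, it optimises the cheaper-looking metric while the cost of a real lead stays hidden.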

2. Creative Quality and Inventory

For Meta, this means ad variant volume. For Google, it means headline and description quality. For both, it means relevance to the audience at each funnel stage.

A fashion e-commerce client launched Meta Advantage+ campaigns with three creatives, all product-focused with a white background and identical format. Advantage+ had nothing to differentiate between. ROAS dropped 15% from their previous manual structure. When we expanded to 12 variants across three formats (product, lifestyle, user-generated content) and four message angles (new arrivals, social proof, offer-led, value proposition), ROAS recovered to 23% above their pre-Advantage+ baseline within six weeks.

The AI isn’t magic. It’s a testing engine that needs options to test.

3. Data Freshness and Coverage

AI systems optimise based on recent patterns. Stale data trains them on irrelevant patterns. Three data quality issues we see repeatedly:

- Conversion lag: CRM data imported to platforms weekly instead of daily. If a customer converts today, the platform doesn’t know until next Monday. In the meantime, the algorithm keeps bidding based on last week’s model. Fix: API-based CRM sync with sub-24-hour refresh (HubSpot and Salesforce both support this natively).

- Audience coverage gaps: Browser-pixel tracking missing mobile conversions due to iOS attribution limits. The algorithm sees half the conversion picture and creates a distorted model. Fix: Meta Conversions API + Google Enhanced Conversions running in parallel with browser pixels.

- Recency mismatch: Using 180-day audience windows for fast-moving consumer categories where 7-day behaviour is more predictive. The model is trained on old buying behaviour in a market that moves fast. Fix: segment audiences by recency and weight shorter windows higher in your conversion value signals.
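One way to picture the recency fix is a lookup that scales the conversion value you report by how recently the customer was active. This is a rough sketch, not a platform feature: the bucket boundaries, the weights, and the function names are all assumptions you would tune per category.

```python
from datetime import date

# Hypothetical recency buckets: (max days since last activity, weight).
# Shorter windows get higher weights; values here are placeholders.
RECENCY_WEIGHTS = [(7, 1.0), (30, 0.5), (90, 0.25), (180, 0.1)]


def recency_weight(last_activity: date, today: date) -> float:
    """Return the weight for the first bucket the customer falls into."""
    days = (today - last_activity).days
    if days < 0:
        raise ValueError("last_activity is in the future")
    for max_days, weight in RECENCY_WEIGHTS:
        if days <= max_days:
            return weight
    return 0.0  # older than the longest window: ignore


def weighted_conversion_value(order_value: float,
                              last_activity: date, today: date) -> float:
    """Scale the value sent as a conversion signal by recency."""
    return order_value * recency_weight(last_activity, today)


today = date(2024, 1, 15)
print(weighted_conversion_value(2_000, date(2024, 1, 10), today))  # active 5 days ago
print(weighted_conversion_value(2_000, date(2024, 1, 1), today))   # active 14 days ago
```

The design choice is deliberately crude. Step buckets are easy to explain to a client and easy to audit; a smooth decay curve would work too, but is harder to sanity-check by eye.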

Benchmarks by Vertical: Realistic Ranges

| Vertical | Expected CPA Improvement (AI vs. Manual) | Primary AI Tool |
|---|---|---|
| D2C e-commerce | 18–34% | Smart Bidding + Advantage+ |
| B2B lead gen (SaaS) | 12–22% | Smart Bidding (CRM-linked) |
| Real estate portals | 8–19% | Smart Bidding (qualified lead signal) |
| Food delivery / local services | 14–24% | Smart Bidding + location signals |
| EdTech / online courses | 11–21% | Advantage+ Shopping (for D2C enrolment) |
| Fintech (regulated products) | 6–15% | Smart Bidding (compliance limits targeting) |

Fintech is lower because regulatory constraints limit the targeting signals the AI can use. You can’t use financial behaviour data in many markets, which removes some of the highest-signal inputs.

Measurement: How We Verify These Numbers

We don’t measure platform-reported improvements. Platforms have an incentive to show good numbers, and they don’t lose business when they inflate results.

What we use:

- Triple Whale (e-commerce accounts) — gives us first-party attribution that reconciles platform data with actual Shopify orders. We’ve consistently found Meta over-reports ROAS by 31-46% versus business-verified ROAS when the same customer also touched Google. Triple Whale shows the overlap.

- Northbeam (B2B and performance-heavy accounts) — better for multi-touch B2B funnels with long sales cycles. The data model is more sophisticated for accounts with 30+ days between first click and conversion.

- GA4 + BigQuery — for all accounts, we maintain an independent analytics layer. Platform claims are always benchmarked against GA4 session data and against CRM conversion records.

- Looker Studio — our client dashboard layer, pulling from BigQuery. When a client asks “is the AI working?”, the answer comes from our verified data, not from Google’s or Meta’s attribution.

The gap between what platforms report and what’s verified in business results is typically 20-35%. Worth knowing before claiming AI ROI to your finance team.
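If you want to put a number on that gap for your own account, a minimal sketch follows. It assumes the gap is expressed relative to the verified figure, which is our reading rather than a fixed industry definition, and the revenue and spend values are made up for illustration.

```python
def roas(revenue: float, spend: float) -> float:
    """Return on ad spend: attributed revenue divided by spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return revenue / spend


def over_reporting_pct(platform_roas: float, verified_roas: float) -> float:
    """Percentage by which the platform figure exceeds the verified one.

    Assumption: the gap is measured relative to verified ROAS.
    """
    if verified_roas <= 0:
        raise ValueError("verified_roas must be positive")
    return (platform_roas - verified_roas) / verified_roas * 100


# Illustrative figures only (hypothetical account, one month).
spend = 100_000.0
platform = roas(revenue=500_000.0, spend=spend)  # what the ad platform claims
verified = roas(revenue=400_000.0, spend=spend)  # reconciled against actual orders

gap = over_reporting_pct(platform, verified)
print(f"Platform ROAS {platform:.1f}, verified ROAS {verified:.1f}, gap {gap:.0f}%")
```

Report the verified figure to finance and keep the platform figure as a directional signal for in-platform optimisation.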