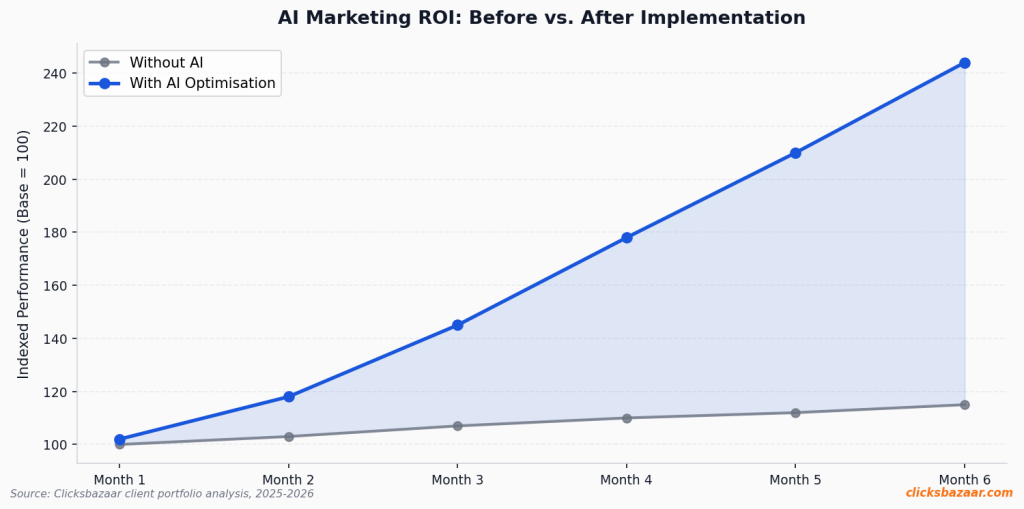

Last quarter, a D2C skincare brand came to us with a fairly standard growth problem. Rs. 2.3 crore in monthly ad spend across Google and Meta, decent ROAS, but the ops were killing them. Four people managing 60+ active campaigns. Weekly reporting alone was eating 11 hours. Their instinct was to hire two more people.

We talked them out of it.

Six months later, they’re running 90+ campaigns with the same team — and their blended CPA dropped 31.4%. Not because they hired smarter. Because they stopped doing things that a well-configured algorithm does better.

That’s the actual story of AI in performance marketing right now. Not the hype version.

The Work That’s Eating Your Team (And Shouldn’t Be)

Here’s something most agencies won’t tell you: a huge percentage of what a mid-level performance marketer does every day is genuinely low-leverage. Not because they’re bad at their job. Because the nature of paid media has changed.

Bid adjustments. Audience list refreshes. Budget reallocation between campaigns that are above or below target. Creative rotation when frequency climbs. Pulling reports that everyone skims and nobody acts on until Friday. These tasks used to require human judgment because the data was slow and the tools were dumb. Neither is true anymore.

Google’s Smart Bidding is evaluating 70+ auction-time signals — device type, browser, time, location, search query intent, remarketing list membership, predicted conversion probability — in real time, at every auction. Your analyst doing a bid pass at 9am isn’t competing with that. They’re working from yesterday’s data and making maybe 200 decisions in a 4-hour session. Smart Bidding makes millions.

The math just doesn’t work in humans’ favour at that layer.
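To make the “millions of decisions” point concrete, here’s a toy sketch of what auction-time bidding against a target CPA looks like. At each auction you estimate how likely this particular click is to convert, based on its signals, and then bid roughly target CPA × that probability. This is a textbook expected-value illustration, not Google’s actual model. The signal names, baseline conversion rate and lift values are all made up.

```python
# Toy expected-value bidding against a target CPA.
# ASSUMPTION: illustrative only; this is not Google's Smart Bidding model.
# Signal names, the base rate and the lift values are invented.

BASE_CVR = 0.02  # hypothetical baseline chance that a click converts

# Hypothetical multiplicative effect of individual signal values on conversion odds.
SIGNAL_LIFTS = {
    ("device", "mobile"): 0.8,
    ("device", "desktop"): 1.3,
    ("on_remarketing_list", True): 2.5,
    ("hour", "evening"): 1.2,
}


def predicted_cvr(signals: dict) -> float:
    """Combine per-signal lifts into one conversion probability for this auction."""
    p = BASE_CVR
    for key, value in signals.items():
        p *= SIGNAL_LIFTS.get((key, value), 1.0)  # unknown signals leave p unchanged
    return min(p, 1.0)


def auction_bid(target_cpa: float, signals: dict) -> float:
    """Bid what one click is worth if each conversion is worth target_cpa."""
    return round(target_cpa * predicted_cvr(signals), 2)


if __name__ == "__main__":
    tcpa = 800.0  # Rs. per conversion (hypothetical)
    cold = {"device": "mobile", "on_remarketing_list": False, "hour": "morning"}
    warm = {"device": "desktop", "on_remarketing_list": True, "hour": "evening"}
    print(auction_bid(tcpa, cold))  # 12.8
    print(auction_bid(tcpa, warm))  # 62.4
```

The same target produces a bid nearly five times higher for the warmer click. A real system re-estimates that probability from far more signals, separately for every auction, which is the scale no manual bid pass can match.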

But — and this is the part most AI-enthusiasm pieces skip — that’s only about 60% of what actually needs to happen. The other 40% is still very human. Strategy direction. Creative judgment. Deciding when the algorithm is learning something wrong and needs to be corrected. Reading pattern shifts that haven’t yet shown up in the conversion data. That work isn’t going anywhere.

The brands that scale well in 2026 are the ones that have figured out exactly where the line is.

What We Actually See When Automation Goes Right

The most consistent result we see when a brand implements automation properly is not just efficiency — it’s creative output. Teams that aren’t doing manual bid management have time to make better ads. More variants, faster tests, tighter feedback loops between what the platform is seeing and what creative goes live next.

That’s where the real performance gains live. Not in micro-optimising bids. In getting more signal about what creative actually resonates.

One client — B2B SaaS, lead-gen focused, about Rs. 85L/month in spend — went from 6 active ad variants to 38 in a 90-day period after automating their campaign management layer. Their cost per qualified lead dropped 23.7%. The team size was unchanged. What changed was how they were spending their time.

What automation took off their plate:

- Daily bid review and adjustment (previously 3.5 hrs/week)

- Budget pacing checks and reallocation (2 hrs/week)

- Frequency monitoring and creative pausing (2 hrs/week)

- Weekly performance reporting (6 hrs/week)

That’s 13.5 hours/week redirected to creative strategy and testing. Over a quarter, that’s a meaningful shift in what the team is actually building.
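Here’s the arithmetic behind those numbers as a quick tally, assuming a 13-week quarter:

```python
# Hours per week the automation layer took over, from the list above.
hours_per_week = {
    "bid review and adjustment": 3.5,
    "budget pacing and reallocation": 2.0,
    "frequency monitoring and creative pausing": 2.0,
    "weekly performance reporting": 6.0,
}
WEEKS_PER_QUARTER = 13  # assumption: 52 weeks / 4

weekly = sum(hours_per_week.values())
quarterly = weekly * WEEKS_PER_QUARTER
print(f"{weekly} hrs/week, {quarterly} hrs/quarter")
# 13.5 hrs/week, 175.5 hrs/quarter
```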

The Three Layers — And Why Most Brands Only Build One

We think about AI marketing infrastructure in three layers. Most brands implement the first, skip the second, and never get to the third.

Layer 1 — Signal Quality

Everything in AI-driven marketing depends on what you’re feeding the algorithm. If your conversion tracking is misconfigured, Smart Bidding optimises toward the wrong thing. We’ve seen brands accidentally train bidding algorithms on add-to-cart events instead of actual purchases, then watch CPAs balloon by 60% before anyone figured out why.
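This failure is hard to spot from inside the platform. Using hypothetical figures, the sketch below shows how the bidder can appear to hit a healthy CPA while the real cost per purchase is several times higher. The second half is a simple audit over a hypothetical export of conversion-action settings. Google Ads does distinguish primary (bid-on) from secondary conversion actions, but the dictionary format here is invented for illustration.

```python
# Same spend, two very different CPAs, depending on which event the bidder counts.
# All figures are hypothetical.

def cpa(spend: float, conversions: int) -> float:
    return spend / conversions if conversions else float("inf")


spend = 500_000.0     # Rs. spent in the period
add_to_carts = 2_500  # what a misconfigured account reports as "conversions"
purchases = 400       # what the business actually needs

print(cpa(spend, add_to_carts))  # 200.0  (what the bidder thinks it's paying)
print(cpa(spend, purchases))     # 1250.0 (what a purchase really costs)

# Hypothetical export of an account's conversion-action settings.
conversion_actions = [
    {"name": "Add to cart", "category": "add_to_cart", "primary": True},
    {"name": "Purchase", "category": "purchase", "primary": False},
]


def misconfigured_primaries(actions, goal_category="purchase"):
    """Names of actions the bidder optimises toward that aren't the real goal."""
    return [a["name"] for a in actions if a["primary"] and a["category"] != goal_category]


print(misconfigured_primaries(conversion_actions))  # ['Add to cart']
```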

Server-side tagging, CRM integration, first-party audience building — this isn’t glamorous work. But it’s foundational. Skip it and every automation layer above it underperforms.
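Here’s a minimal sketch of what server-side tagging actually sends: a purchase event built for Meta’s Conversions API. The customer’s email is normalised and SHA-256 hashed, and an event_id is included so Meta can deduplicate the event against the browser pixel. The field names follow Meta’s documentation as we understand it, so check the current docs before relying on them. The values, URL and order ID are hypothetical, and the actual HTTP call (access token, API version, endpoint) is deliberately left out.

```python
import hashlib
import json
import time


def hash_email(email: str) -> str:
    # Normalise (trim whitespace, lowercase) before hashing, then SHA-256 hex.
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def build_purchase_event(email, value, currency, order_id, page_url, user_agent):
    return {
        "event_name": "Purchase",
        "event_time": int(time.time()),  # Unix timestamp in seconds
        "action_source": "website",
        "event_source_url": page_url,
        # Send the same ID as the browser pixel's eventID so the two copies
        # of this purchase are deduplicated rather than double-counted.
        "event_id": order_id,
        "user_data": {
            "em": [hash_email(email)],
            "client_user_agent": user_agent,
        },
        "custom_data": {"currency": currency, "value": value},
    }


payload = {
    "data": [
        build_purchase_event(
            email="  Priya.S@Example.com ",
            value=2499.0,
            currency="INR",
            order_id="order-10293",                         # hypothetical
            page_url="https://shop.example.com/thank-you",  # hypothetical
            user_agent="Mozilla/5.0 (hypothetical)",
        )
    ]
}
print(json.dumps(payload, indent=2))
```

Because this event comes from your server rather than the shopper’s browser, ad blockers and browser privacy restrictions don’t strip it. That’s the signal-loss point behind this layer.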

Layer 2 — Execution Automation

This is what most people mean when they say ‘AI marketing.’ Smart Bidding on Google, Advantage+ Shopping on Meta, Performance Max, programmatic DSPs handling delivery optimisation. Rules-based automation for creative rotation, budget caps, audience exclusions. The day-to-day operations layer.

Built correctly, this layer runs without daily human input. You set the guardrails — target CPA, budget floors and ceilings, frequency caps — and the system operates within them continuously. Check in weekly, not daily.
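In practice, “guardrails” means a small set of limits that a human sets once and every automated change has to respect. The sketch below shows that idea. The Guardrails type, its field names and the example numbers are hypothetical. On the platforms themselves, these map to things like the bid strategy’s target, campaign budgets and frequency controls, where offered.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    """Hypothetical per-campaign limits: set once by a human, reviewed weekly."""
    target_cpa: float            # handed to the platform's bid strategy as its target
    daily_budget_floor: float    # automation may never starve a campaign below this
    daily_budget_ceiling: float  # ...or push it above this
    frequency_cap: float         # max average impressions per user per week

    def __post_init__(self):
        if self.daily_budget_floor > self.daily_budget_ceiling:
            raise ValueError("budget floor must not exceed the ceiling")


def clamp_budget(proposed: float, g: Guardrails) -> float:
    """Whatever an automated reallocation proposes, keep it inside the floor/ceiling band."""
    return max(g.daily_budget_floor, min(proposed, g.daily_budget_ceiling))


def over_frequency_cap(weekly_frequency: float, g: Guardrails) -> bool:
    return weekly_frequency > g.frequency_cap


g = Guardrails(target_cpa=900.0, daily_budget_floor=20_000.0,
               daily_budget_ceiling=80_000.0, frequency_cap=3.0)
print(clamp_budget(120_000.0, g))  # 80000.0
print(clamp_budget(5_000.0, g))    # 20000.0
print(clamp_budget(45_000.0, g))   # 45000.0
print(over_frequency_cap(3.4, g))  # True
```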

Layer 3 — Insight and Direction

This is where the competitive edge actually comes from, and it’s the layer brands most often neglect. It means using AI outputs to surface patterns, inform creative strategy, identify audience segments worth testing, and predict which campaigns are likely to hit learning limits before they do.

The marketers winning right now are the ones who can read what an algorithm is telling them and make fast, high-quality decisions from that signal. That’s not a tool skill. It’s a judgement skill.
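One concrete way to read the system ahead of time is to predict which ad sets will struggle to exit the learning phase. Meta’s general guidance is that an ad set needs roughly 50 optimisation events in a week to exit learning. The sketch below projects weekly events from budget and expected CPA and flags anything short of that. Check the threshold against current platform docs. The ad-set names and numbers are hypothetical, and a straight budget ÷ CPA projection ignores seasonality and delivery constraints.

```python
# ASSUMPTION: ~50 optimisation events per week is Meta's general guidance for
# exiting the learning phase; confirm against current platform documentation.
LEARNING_EVENTS_PER_WEEK = 50


def projected_weekly_events(daily_budget: float, expected_cpa: float) -> float:
    """Naive projection: how many optimisation events a week of budget can buy."""
    return daily_budget * 7 / expected_cpa


def learning_risk(ad_sets: dict[str, tuple[float, float]]) -> list[str]:
    """Ad sets (sorted by name) projected to fall short of the weekly learning threshold."""
    return sorted(
        name
        for name, (daily_budget, expected_cpa) in ad_sets.items()
        if projected_weekly_events(daily_budget, expected_cpa) < LEARNING_EVENTS_PER_WEEK
    )


# Hypothetical ad sets: name -> (daily budget in Rs., expected CPA in Rs.)
ad_sets = {
    "prospecting_broad": (15_000.0, 1_200.0),    # 87.5 events/week
    "retargeting_30d": (4_000.0, 700.0),         # 40.0 events/week
    "lookalike_purchasers": (6_000.0, 1_500.0),  # 28.0 events/week
}
print(learning_risk(ad_sets))  # ['lookalike_purchasers', 'retargeting_30d']
```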

What Actually Goes Wrong

We’ve seen this fail in predictable ways. Three patterns come up over and over.

Automating before the data is clean. The algorithm is only as smart as what you feed it. Agencies that skip the tracking audit and go straight to Smart Bidding on campaigns with fragmented conversion data usually end up with inflated conversion numbers and disappointed clients. Sort the data foundation first. Always.
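A tracking audit can start as a plain reconciliation. Compare the conversions the ad platform reports per campaign with what your CRM recorded, and flag gaps above a tolerance. The sketch below assumes your CRM records can be attributed to campaigns (for example, via UTM parameters or click IDs). The 10% tolerance is an illustrative choice, not a standard, because attribution windows and deduplication mean some gap is normal.

```python
import math


def conversion_gaps(platform: dict[str, int], crm: dict[str, int],
                    tolerance: float = 0.10) -> dict[str, float]:
    """Relative gap (platform - CRM) / CRM for campaigns outside the tolerance."""
    flagged = {}
    for campaign in sorted(set(platform) | set(crm)):
        reported = platform.get(campaign, 0)
        actual = crm.get(campaign, 0)
        if actual == 0:
            # Platform claims conversions the CRM never saw: infinitely over-reported.
            gap = math.inf if reported > 0 else 0.0
        else:
            gap = (reported - actual) / actual
        if abs(gap) > tolerance:
            flagged[campaign] = gap if math.isinf(gap) else round(gap, 3)
    return flagged


# Hypothetical monthly counts per campaign.
platform_reported = {"search_brand": 520, "search_generic": 310, "pmax_all": 900}
crm_closed = {"search_brand": 500, "search_generic": 300, "pmax_all": 610}
print(conversion_gaps(platform_reported, crm_closed))  # {'pmax_all': 0.475}
```

A campaign that over-reports by nearly half, like the hypothetical pmax_all here, is exactly the kind that would inflate conversion numbers once handed to Smart Bidding.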

Going hands-off too fast. Automation needs oversight, not management. There’s a difference. A campaign that gets stuck in the learning phase, a creative that gets flagged for a policy violation mid-flight, an audience exclusion that is inadvertently removed: these things happen. Weekly reviews with clear anomaly thresholds catch them before they become expensive. Daily management doesn’t scale; weekly accountability does.
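A weekly anomaly pass can be very simple. The sketch below flags campaigns whose weekly CPA runs more than 30% above target, which is the threshold from the checklist at the end of this piece. It also flags campaigns that spent money without converting. The WeeklyStats record and the sample rows are hypothetical; in practice you’d populate them from a platform export or reporting API.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    campaign: str
    spend: float
    conversions: int
    target_cpa: float


def weekly_anomalies(rows: list[WeeklyStats], threshold: float = 0.30) -> list[tuple[str, str]]:
    """Flag campaigns whose CPA is > threshold above target, or that spent with no conversions."""
    flagged = []
    for r in rows:
        if r.conversions == 0:
            if r.spend > 0:
                flagged.append((r.campaign, "spend with zero conversions"))
            continue
        cpa = r.spend / r.conversions
        if cpa > r.target_cpa * (1 + threshold):
            flagged.append((r.campaign, f"CPA {cpa:.0f} vs target {r.target_cpa:.0f}"))
    return flagged


rows = [
    WeeklyStats("search_generic", spend=90_000, conversions=100, target_cpa=800),
    WeeklyStats("pmax_all", spend=150_000, conversions=120, target_cpa=900),
    WeeklyStats("meta_prospecting", spend=40_000, conversions=0, target_cpa=1_000),
]
for campaign, reason in weekly_anomalies(rows):
    print(campaign, "->", reason)
# pmax_all -> CPA 1250 vs target 900
# meta_prospecting -> spend with zero conversions
```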

Underinvesting in creative while over-investing in optimisation. This one stings a bit because it’s so common. Brands will spend weeks perfecting their bidding strategy and then run the same three creatives for four months. AI can optimise delivery of weak ads extremely efficiently. The results are still bad. More creative testing, not less, is the correct investment when you free up time from manual campaign management.

The 30-Day Starting Point

If you’re currently at Rs. 50L/month or above in ad spend and you want to start shifting toward this model, here’s the honest order of operations:

- First two weeks: do the unsexy tracking audit. Verify your platform-reported conversions match your CRM. Fix discrepancies. Set up server-side tagging if you haven’t. Yes, this is boring. Do it anyway.

- Weeks two through three: switch your highest-volume, highest-conversion campaigns to Smart Bidding (tCPA or tROAS). Set your targets 15% more conservative than your current performance (a tCPA about 15% above your current CPA, or a tROAS about 15% below your current ROAS). Give the algorithm room to learn.

- Weeks three and four: implement automated rules for creative fatigue on Meta. Pause automatically when frequency exceeds 3.2 and CTR has dropped 20% from the ad’s first-week baseline. Monitor what gets paused.
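Here are the two numeric rules in this plan, sketched as code. We read “15% more conservative” as a looser target: tCPA 15% above current CPA, tROAS 15% below current ROAS. The fatigue rule pauses an ad only when both conditions hold. The function names are hypothetical. On Meta, you’d normally set this up in Ads Manager’s automated rules. Check whether a “drop from first-week baseline” condition can be expressed natively there. If it can’t, a small script like this can compute it.

```python
def starting_targets(current_cpa=None, current_roas=None, cushion=0.15):
    """Looser-than-current starting targets, giving the bidder room to learn.

    tCPA goes 15% above current CPA; tROAS goes 15% below current ROAS.
    """
    targets = {}
    if current_cpa is not None:
        targets["tcpa"] = round(current_cpa * (1 + cushion), 2)
    if current_roas is not None:
        targets["troas"] = round(current_roas * (1 - cushion), 2)
    return targets


def should_pause_creative(frequency, first_week_ctr, current_ctr,
                          max_frequency=3.2, min_ctr_drop=0.20):
    """Fatigue rule: frequency above 3.2 AND CTR down 20%+ from its first-week baseline."""
    if first_week_ctr <= 0:
        return False  # no usable baseline yet
    ctr_drop = (first_week_ctr - current_ctr) / first_week_ctr
    return frequency > max_frequency and ctr_drop >= min_ctr_drop


print(starting_targets(current_cpa=1_000.0, current_roas=4.0))  # {'tcpa': 1150.0, 'troas': 3.4}
print(should_pause_creative(3.5, first_week_ctr=0.012, current_ctr=0.009))  # True  (25% drop)
print(should_pause_creative(3.5, first_week_ctr=0.012, current_ctr=0.011))  # False (~8% drop)
print(should_pause_creative(2.8, first_week_ctr=0.012, current_ctr=0.006))  # False (frequency OK)
```

Requiring both conditions is deliberate. High frequency alone can be fine for a strong ad, and a CTR dip at low frequency is more likely noise than fatigue.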

End of month one, review what you’ve freed up. For most accounts at this spend level, it’s 10-15 hours per week. Then ask: what would you build with that time if you were being ambitious?

That’s where the real conversation starts.

A Note on What This Isn’t

This isn’t a pitch for automation replacing expertise. It’s the opposite. The more the execution layer gets automated, the more valuable genuine strategic and creative expertise becomes. The brands that win are the ones with people who understand the systems well enough to set them up correctly, interpret their outputs clearly, and know when to override them.

Smaller team, higher leverage. That’s the actual unlock.

Quick Checklist Before You Start

- Verify conversion tracking matches CRM data — fix any gaps first

- Confirm you have 30+ conversions/month per campaign before enabling Smart Bidding

- Set up server-side tracking to reduce signal loss from browser privacy changes

- Define budget floors and bid caps before handing campaigns to automation

- Build a first-party audience strategy not dependent on third-party cookies

- Create an automated creative fatigue rule on Meta (frequency + CTR decay)

- Commit to weekly reviews instead of daily — let algorithms complete learning cycles

- Reinvest freed time into more creative variants and faster testing cycles

- Set anomaly thresholds: automatically flag any campaign whose CPA runs 30%+ above target

- Review automation performance at 30/60/90 days and adjust guardrails accordingly
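Two of these items are easy to automate from day one. The first is a readiness check against the 30-conversions-per-month bar before a campaign moves to Smart Bidding. The second is putting the 30/60/90-day review dates on the calendar. The campaign names, counts and launch date below are hypothetical.

```python
from datetime import date, timedelta

MIN_MONTHLY_CONVERSIONS = 30  # the checklist's bar for enabling Smart Bidding


def smart_bidding_ready(conversions_last_30_days: dict[str, int]) -> dict[str, bool]:
    """True for campaigns with enough recent conversions for the bidder to learn from."""
    return {name: n >= MIN_MONTHLY_CONVERSIONS for name, n in conversions_last_30_days.items()}


def review_dates(launch: date, checkpoints=(30, 60, 90)) -> list[date]:
    """Calendar dates for the 30/60/90-day guardrail reviews."""
    return [launch + timedelta(days=d) for d in checkpoints]


print(smart_bidding_ready({"search_brand": 140, "search_generic": 45, "display_remarketing": 12}))
# {'search_brand': True, 'search_generic': True, 'display_remarketing': False}
print(review_dates(date(2026, 1, 5)))
# [datetime.date(2026, 2, 4), datetime.date(2026, 3, 6), datetime.date(2026, 4, 5)]
```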